Background

A while ago, I wrote a post: Azure DevOps Multi-Stage pipelines for Enterprise AKS scenarios. The idea was to have a platform team that enforces best practices, security and other compliance aspects on the Kubernetes platform and allows one or more workload teams (i.e., product teams) to build and deploy their workloads into the cluster. That post suggested a practical approach based on GitOps.

In a nutshell, the platform team keeps a Git repository as their source of truth, where they define the details of each workload team/product as Infrastructure as Code (in YAML files, for instance). When they onboard a new workload onto the platform, they write the specifics of the workload into a new YAML file, and a pipeline ensures that the changes in the repository are reflected in the underlying infrastructure. Essentially, the pipeline creates a namespace in the Kubernetes cluster, creates a service account with a custom role binding for deploying workload manifests (Ingresses, Services, Deployments, Pods etc.), creates a Project in Azure DevOps, and creates and wires an Environment that points to the namespace in the cluster. Once the pipeline completes, the workload/product team gets a ready-to-run Project in Azure DevOps with an environment correctly configured for them, which dramatically reduces the complexity of setting this up manually and managing the changes over time.

The earlier post used Azure DevOps and Azure Pipelines as the tools to demonstrate this. This post shows how to achieve a similar scenario using GitHub repositories and GitHub Actions.

Platform state

We start with a Git repository (an example repository can be found here) in which the platform team defines the state of the workload profiles (with YAML-based Infrastructure as Code). The platform team owns the repository (only their team can approve pull requests, for instance), and an associated GitHub Actions workflow idempotently keeps the underlying infrastructure in sync.

The folder structure for the workload profiles looks like the following:

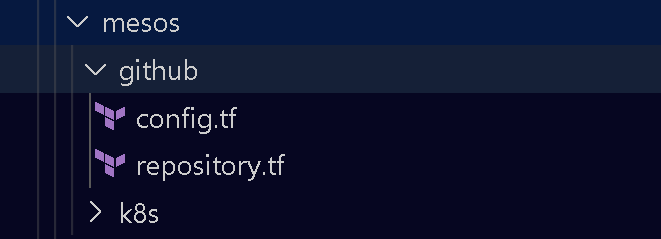

For instance, two workloads/products (i.e., mesos and sphere) are defined in two folders with the same names. Let’s look at the files and sub-folders inside an individual workload folder.

The sub-folder k8s gives away its purpose: this is where we define the namespace, service accounts and roles for the specific workload/product. You can check the content of these YAML files in the example repository mentioned above; they are just typical Kubernetes manifests.
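To give a feel for what such manifests might contain, here is a minimal sketch for the mesos workload. It is not copied from the example repository: the service account name (mesos-deployer), the role and binding names, and the exact list of permitted resources are all assumptions made for illustration.

```yaml
# Namespace dedicated to the workload.
apiVersion: v1
kind: Namespace
metadata:
  name: mesos
---
# Service account that the workload team's workflow will use (hypothetical name).
apiVersion: v1
kind: ServiceAccount
metadata:
  name: mesos-deployer
  namespace: mesos
---
# Namespaced role: permissions apply inside "mesos" only (assumed resource list).
apiVersion: rbac.authorization.k8s.io/v1
kind: Role
metadata:
  name: mesos-deployer-role
  namespace: mesos
rules:
  - apiGroups: [""]
    resources: ["pods", "services", "serviceaccounts"]
    verbs: ["get", "list", "watch", "create", "update", "patch", "delete"]
  - apiGroups: ["apps"]
    resources: ["deployments", "replicasets"]
    verbs: ["get", "list", "watch", "create", "update", "patch", "delete"]
  - apiGroups: ["networking.k8s.io"]
    resources: ["ingresses"]
    verbs: ["get", "list", "watch", "create", "update", "patch", "delete"]
---
# Bind the role to the service account.
apiVersion: rbac.authorization.k8s.io/v1
kind: RoleBinding
metadata:
  name: mesos-deployer-binding
  namespace: mesos
subjects:
  - kind: ServiceAccount
    name: mesos-deployer
    namespace: mesos
roleRef:
  apiGroup: rbac.authorization.k8s.io
  kind: Role
  name: mesos-deployer-role
```

Because a Role is namespaced, a service account bound this way has no access to cluster-scoped resources such as nodes, which is the isolation the platform team is after.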

Next to that, we also have a sub-folder named github. This is where we write the Terraform files that provision a new GitHub repository for the workload team, create GitHub Actions environments and set up the secrets that allow product teams to deploy directly into the namespace created earlier, using the service account that was designated for their pipelines/workflows.

Let’s take a look at config.tf:

    # Make sure you are logged in with the Azure CLI (az login) before running this.
    terraform {
      backend "azurerm" {
        resource_group_name  = "platform-resources"
        storage_account_name = "terraformstate"
        container_name       = "k8s-github-governance"
        key                  = "prod.mesos.tfstate"
      }
    }

    terraform {
      required_providers {
        azurerm = {
          source  = "hashicorp/azurerm"
          version = "=2.46.0"
        }
        github = {
          source  = "integrations/github"
          version = "~> 4.0"
        }
      }
    }

    provider "azurerm" {
      features {}
    }

    provider "github" {}

Similarly, repository.tf looks like the following:

    data "github_user" "current" {
      username = "moimhossain"
    }

    resource "github_repository" "mesosworkload" {
      name        = "mesos-workload"
      description = "Kubernetes workload repository"
      visibility  = "public"
      auto_init   = true
    }

    resource "github_repository_environment" "production" {
      environment = "Production"
      repository  = github_repository.mesosworkload.name

      reviewers {
        users = [data.github_user.current.id]
      }

      deployment_branch_policy {
        protected_branches     = true
        custom_branch_policies = false
      }
    }

    resource "github_actions_environment_secret" "k8s_token" {
      repository      = github_repository.mesosworkload.name
      environment     = github_repository_environment.production.environment
      secret_name     = "k8s_token"
      plaintext_value = filebase64("./kubeconfig")
    }

With this folder structure and these files in place, we can now come up with a GitHub workflow that does the following:

- Applies all the manifests to the Kubernetes cluster, thereby creating (if they do not already exist) the namespace for the workload, the service accounts, the roles and the role bindings.

- Uses Terraform to create a repository for the workload team and ensures the following exist in that repository:

  - An environment

  - A secret associated with the environment that contains the credentials (a base64-encoded kubeconfig) of the service account created for the workload

The workflow looks like the following:

    name: Governance

    on:
      push:
        branches: [ main ]
      pull_request:
        branches: [ main ]
      workflow_dispatch:

    jobs:
      governance:
        runs-on: ubuntu-latest
        environment: Production
        steps:
          - uses: actions/checkout@v2
            with:
              fetch-depth: 2
          - name: Azure Login
            uses: Azure/login@v1
            with:
              creds: ${{ secrets.AZURE_CREDENTIALS }}
          - name: Apply platform governance
            env:
              ARM_CLIENT_ID: ${{ secrets.CLIENT_ID }}
              ARM_CLIENT_SECRET: ${{ secrets.CLIENT_SECRET }}
              ARM_TENANT_ID: ${{ secrets.TENANT_ID }}
              ARM_SUBSCRIPTION_ID: ${{ secrets.SUBSCRIPTIONID }}
            run: |
              export GITHUB_TOKEN=${{ secrets.PAT }}
              az account set --subscription ${{ secrets.SUBSCRIPTIONID }}
              az aks get-credentials --resource-group kubernetes-resources --name k8s-platform-cluster --overwrite-existing
              cd src
              chmod +x ./do.sh
              chmod +x ./generate-kubeconfig.sh
              ./do.sh ${{ github.sha }}

Two bash scripts (do.sh and generate-kubeconfig.sh) invoke the Kubernetes API and apply the Terraform configuration; you can find them in the example repository.
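To make the content of the secret more concrete: repository.tf base64-encodes a ./kubeconfig file into the k8s_token environment secret. A kubeconfig scoped to a workload's service account might look roughly like the sketch below. This is an assumption about the general shape of such a file, not the actual output of generate-kubeconfig.sh; the values in angle brackets are placeholders, and the user name mesos-deployer and context name are hypothetical.

```yaml
apiVersion: v1
kind: Config
clusters:
  - name: k8s-platform-cluster
    cluster:
      server: https://<api-server-address>            # placeholder
      certificate-authority-data: <base64-encoded-ca>  # placeholder
users:
  - name: mesos-deployer                               # hypothetical service account name
    user:
      token: <service-account-token>                   # placeholder
contexts:
  - name: mesos
    context:
      cluster: k8s-platform-cluster
      user: mesos-deployer
      namespace: mesos                                 # default namespace for kubectl commands
current-context: mesos
```

Since the file carries only the service account's token, whoever holds it is limited to whatever the role bound to that service account allows.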

Workload repository

Once a workload repository is provisioned through the above process, the workload team can create their own GitHub Actions workflow. They only need to point to the environment that is already provisioned for them, with all the secrets configured in place, and they can immediately start deploying their Kubernetes manifests to the designated namespace. Here’s an example of such a workflow:

    name: Deployment

    on:
      push:
        branches: [ main ]
      pull_request:
        branches: [ main ]
      workflow_dispatch:

    jobs:
      deploy:
        runs-on: ubuntu-latest
        environment: Production # Target the environment provisioned by the platform team
        steps:
          - uses: actions/checkout@v2
          - name: Deploy workload
            run: |
              # -p avoids a failure if the directory already exists
              mkdir -p $HOME/.kube/
              echo "${{ secrets.K8S_TOKEN }}" | base64 -d > $HOME/.kube/config
              # The following statements work and prove that the connectivity is good.
              # Therefore, the workload can be applied with kubectl apply.
              kubectl get all -n mesos
              kubectl get serviceaccounts -n mesos
              # The following, however, would fail if uncommented,
              # because the workload team cannot issue commands that go beyond their namespace.
              # kubectl get nodes
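As a small illustration of the next step, a workload team could add a step like the one sketched below to the same job. The ./manifests folder is an assumed location in the workload repository, and the step relies on the kubeconfig written to $HOME/.kube/config by the earlier step.

```yaml
# Hypothetical extra step, placed after "Deploy workload" in the same job.
- name: Apply workload manifests
  run: |
    # ./manifests is an assumed folder containing the team's Kubernetes YAML files
    kubectl apply -n mesos -f ./manifests
```

Whether the apply succeeds depends on the role the platform team bound to the service account: any resource kind not covered by that role would be rejected by the API server.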

Wrap-up

That’s pretty much it. The idea of this post is not to present a literal solution (of course, you are more than welcome to use it if it fits your scenario), but to offer a few ideas (and possibly some code too) that you can use to build your own orchestration. Like most of my other posts and their accompanying code samples, the source code is licensed under MIT, and you are absolutely free and welcome to use it in any way you find useful. Any acknowledgement of usefulness is much appreciated.

Enjoy!